Organizations rely on data pipelines to convert raw information into usable insights. One of the most important processes behind this is ETL (Extract, Transform, and Load). ETL gathers data from multiple sources, reshapes it into useful formats, and delivers it to analytics platforms or data warehouses.

As data volumes grow, however, inefficient ETL pipelines can become slow, costly, and difficult to maintain. This is why optimizing ETL processes has become a critical focus for modern data engineering teams.

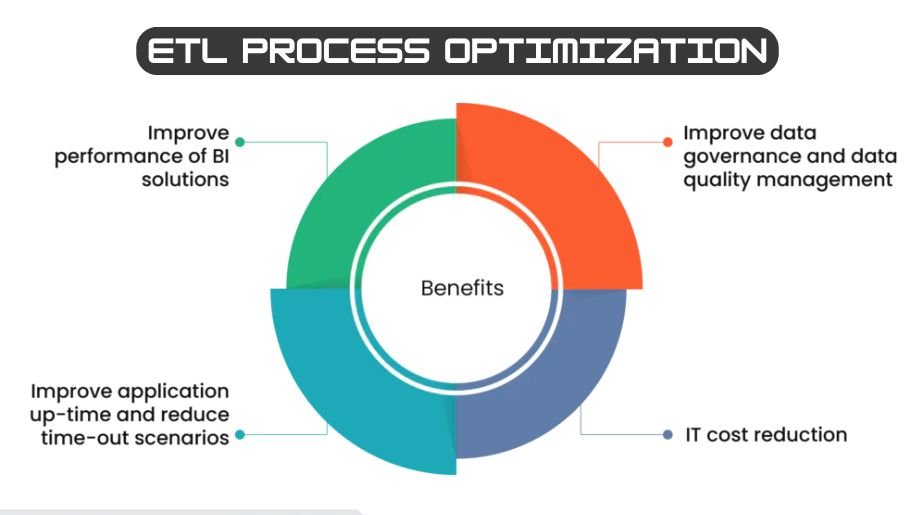

ETL optimization involves improving the speed, scalability, reliability, and resource efficiency of data workflows. Rather than simply transferring data, optimized pipelines aim to reduce processing delays, control infrastructure costs, and maintain consistent data quality. When done effectively, optimization accelerates analytics while strengthening overall system performance.

Understanding the Three Stages of ETL

Before exploring optimization strategies, it helps to revisit the main stages of ETL.

Extraction is the process of pulling data from various sources such as databases, APIs, or files.

Transformation prepares the data: cleaning it, filtering it, restructuring it, and applying business logic.

Loading transfers the processed data into a destination system like a data warehouse or analytics environment.

Each stage can introduce bottlenecks. Slow queries can delay extraction, complex calculations can overwhelm transformation processes, and inefficient database operations can slow down loading. Effective optimization requires examining each stage individually while also considering the pipeline’s overall architecture.

Why ETL Process Optimization Is Essential

Modern businesses generate enormous volumes of both structured and unstructured data. Without optimization, pipelines often struggle with long runtimes, rising infrastructure expenses, and limited scalability. Slow data processing can result in outdated reports and delayed decision-making.

Optimized pipelines reduce latency, enabling teams to work with fresher data and respond more quickly to changes. They also increase reliability by preventing failures caused by resource overload or inefficient workflows. In cloud environments, where billing typically follows compute and storage usage, eliminating wasted work lowers the cost of each run and lets organizations process larger datasets on the same budget.

Common Causes of Poor ETL Performance

Many pipelines become inefficient as data grows and requirements evolve. Designs that worked well with small datasets may fail to scale effectively.

A frequent issue is performing full data reloads during every pipeline run instead of processing only new or updated records. Another challenge is overly complex transformation logic, especially when operations are executed row by row rather than in batches.

Poor data quality can also slow pipelines because inconsistent or incomplete data requires additional handling. Lack of monitoring is another major problem: without performance visibility, teams struggle to identify bottlenecks or inefficiencies. Over time, these issues compound, leading to longer execution times and higher costs.

Optimizing the Extraction Phase

Improving extraction efficiency starts with reducing unnecessary data movement. Querying only the required columns and applying filters at the source can significantly decrease transfer times. Well-designed queries are especially important when working with relational databases.

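Incremental extraction is one of the most effective optimization techniques. Instead of pulling the entire dataset each time, the pipeline records a high-water mark, such as the latest modification timestamp or the highest sequential identifier it has processed, and on the next run pulls only records beyond that mark. This requires the source to maintain that timestamp or identifier reliably, but it minimizes processing overhead and accelerates execution.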
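A minimal sketch of timestamp-based incremental extraction is shown here. It uses an in-memory SQLite database as a stand-in for a real source; the `orders` table, its columns, and the helper names are assumptions made for illustration, and `updated_at` is assumed to hold ISO-8601 strings, which sort correctly as text.

```python
import sqlite3

def extract_incremental(conn, last_watermark):
    """Return rows changed after last_watermark, plus the new watermark."""
    rows = conn.execute(
        # Select only the needed columns and filter at the source.
        "SELECT id, amount, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # Advance the watermark only when something new was seen.
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark

def make_demo_source():
    """Build a small hypothetical source table for the example."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
    )
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [
            (1, 10.0, "2024-01-01T00:00:00"),
            (2, 25.5, "2024-01-02T00:00:00"),
            (3, 7.25, "2024-01-03T00:00:00"),
        ],
    )
    return conn

if __name__ == "__main__":
    source = make_demo_source()
    changed, watermark = extract_incremental(source, "2024-01-01T00:00:00")
    print(changed, watermark)
```

The strict `>` comparison means a record written later with exactly the same timestamp as the stored watermark would be skipped, so a real pipeline may need an overlap window or a tie-breaking column.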

Parallel extraction is another valuable strategy. By splitting workloads across multiple threads or nodes, teams can retrieve data from large systems much faster.
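One way to sketch parallel extraction is to split a key range into partitions and fetch them on a thread pool. In this example `fetch_partition` is a hypothetical placeholder that fabricates rows locally; in a real pipeline it would issue a range query or API call, and the partition size and worker count would need tuning against what the source system can tolerate.

```python
from concurrent.futures import ThreadPoolExecutor

def fetch_partition(bounds):
    """Hypothetical stand-in for a range query against the source."""
    start, end = bounds
    return [{"id": i} for i in range(start, end)]

def make_partitions(min_id, max_id, size):
    """Split [min_id, max_id) into contiguous, non-overlapping ranges."""
    return [(s, min(s + size, max_id)) for s in range(min_id, max_id, size)]

def extract_parallel(min_id, max_id, size, workers=4):
    """Fetch all partitions concurrently and return the rows in key order."""
    partitions = make_partitions(min_id, max_id, size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in partition order, regardless of finish order.
        chunks = list(pool.map(fetch_partition, partitions))
    return [row for chunk in chunks for row in chunk]

if __name__ == "__main__":
    print(len(extract_parallel(0, 10, 3)))
```

Threads suit this case because extraction is usually I/O-bound; the workers spend most of their time waiting on the network or the database rather than on the CPU.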

Enhancing Transformation Performance

The transformation stage often consumes the most resources in an ETL pipeline. Complex joins, aggregations, and data-cleansing tasks can place heavy demands on compute power.

One powerful strategy is pushing transformations closer to the data warehouse or database engine. Modern data platforms are optimized for large-scale processing and can often run joins and aggregations more efficiently than external scripts, which first have to move the data out of the engine.
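The difference can be illustrated with a small sketch that computes the same totals two ways: once in application code after pulling every row, and once inside the engine. SQLite stands in for a warehouse here, and the `sales` table and function names are assumptions for the example.

```python
import sqlite3
from collections import defaultdict

def totals_in_python(conn):
    """Pull every row out of the database, then aggregate in application code."""
    totals = defaultdict(float)
    for region, amount in conn.execute("SELECT region, amount FROM sales"):
        totals[region] += amount
    return dict(totals)

def totals_in_engine(conn):
    """Let the database aggregate; only one row per region leaves the engine."""
    query = "SELECT region, SUM(amount) FROM sales GROUP BY region"
    return dict(conn.execute(query).fetchall())

def make_sales_db():
    """Build a small hypothetical sales table for the example."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("east", 10.0), ("west", 5.0), ("east", 2.5), ("west", 1.5)],
    )
    return conn

if __name__ == "__main__":
    db = make_sales_db()
    print(totals_in_engine(db))
```

Both functions return the same result, but the pushed-down version transfers one row per group instead of one row per sale, which is where the savings come from as tables grow.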

Batch processing also improves performance by handling data in larger groups rather than individual records. Simplifying transformation logic, for example by removing redundant calculations or unnecessary steps, further boosts efficiency. In addition, in-memory processing frameworks can speed up transformations by reducing reliance on disk operations.
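A rough sketch of batch-oriented transformation follows. The `batched` helper groups any iterable into fixed-size lists, and `transform_batch` is a hypothetical transformation (trimming and lowercasing names) that stands in for whatever set-based or vectorized operation a real pipeline would apply to each group.

```python
from itertools import islice

def batched(iterable, size):
    """Yield successive lists of up to `size` items from the iterable."""
    it = iter(iterable)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch

def transform_batch(batch):
    """Hypothetical transformation: normalize names and drop empty ones."""
    return [name.strip().lower() for name in batch if name.strip()]

def transform_all(records, size=1000):
    """Apply the transformation one batch at a time instead of row by row."""
    out = []
    for batch in batched(records, size):
        out.extend(transform_batch(batch))
    return out

if __name__ == "__main__":
    print(transform_all([" Ann ", "", "BOB"], size=2))
```

The batch boundary is also a natural place to issue one database call or one vectorized operation per group, which is typically where the performance gain over per-record processing comes from.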

Load Optimization and Efficient Data Storage

Loading data efficiently is just as important as extracting and transforming it. Bulk loading methods are typically faster than inserting rows individually because they reduce transaction overhead. Many pipelines use staging tables to load data quickly before merging it into production tables.
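The staging pattern might look like the sketch below, again with SQLite as a stand-in for the destination. The `customers` and `customers_staging` tables are assumptions for the example, and `INSERT OR REPLACE` is used as SQLite's simplest form of merge; many other engines offer MERGE or upsert statements for the same step.

```python
import sqlite3

def load_via_staging(conn, rows):
    """Bulk-insert rows into a staging table, then merge them into the target."""
    with conn:  # commit on success, roll back on error
        conn.execute("DELETE FROM customers_staging")
        # executemany sends the whole batch through one prepared statement.
        conn.executemany(
            "INSERT INTO customers_staging (id, name) VALUES (?, ?)", rows
        )
        # Merge step: new ids are inserted, existing ids are overwritten.
        conn.execute(
            "INSERT OR REPLACE INTO customers (id, name) "
            "SELECT id, name FROM customers_staging"
        )

def make_target_db():
    """Build hypothetical target and staging tables with one existing row."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute("CREATE TABLE customers_staging (id INTEGER, name TEXT)")
    conn.execute("INSERT INTO customers VALUES (1, 'old name')")
    conn.commit()
    return conn

if __name__ == "__main__":
    db = make_target_db()
    load_via_staging(db, [(1, "Ada"), (2, "Grace")])
    print(db.execute("SELECT id, name FROM customers ORDER BY id").fetchall())
```

Because the inserts and the merge run in a single transaction, a failure partway through leaves the production table unchanged.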

Database partitioning and indexing also affect performance. Partitioning divides large datasets into manageable segments, so loads and queries can touch only the relevant segments and maintenance becomes easier. Indexes speed up queries, but every index must be updated as rows are inserted, so heavy indexing slows loads; for this reason, some teams temporarily drop or disable indexes during large loads and rebuild them afterward.
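The drop-and-rebuild approach can be sketched as follows with SQLite. The `events` table and the index name `idx_events_user` are assumptions for the example; other databases have their own commands for disabling and rebuilding indexes.

```python
import sqlite3

def bulk_load_with_index_rebuild(conn, rows):
    """Drop the index, load the rows, then rebuild the index once."""
    conn.execute("DROP INDEX IF EXISTS idx_events_user")
    try:
        with conn:  # commit the inserts on success, roll back on error
            conn.executemany(
                "INSERT INTO events (user_id, kind) VALUES (?, ?)", rows
            )
    finally:
        # Rebuild the index whether or not the load succeeded.
        conn.execute(
            "CREATE INDEX IF NOT EXISTS idx_events_user ON events (user_id)"
        )

def make_events_db():
    """Build a hypothetical indexed events table for the example."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (user_id INTEGER, kind TEXT)")
    conn.execute("CREATE INDEX idx_events_user ON events (user_id)")
    return conn

if __name__ == "__main__":
    db = make_events_db()
    bulk_load_with_index_rebuild(db, [(1, "click"), (2, "view"), (1, "view")])
    print(db.execute("SELECT COUNT(*) FROM events").fetchone()[0])
```

Whether this pays off depends on the load size relative to the table: for small loads into a large table, rebuilding the index can cost more than maintaining it during the inserts.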

Architectural Strategies for ETL Optimization

Beyond individual techniques, the overall architecture of a pipeline greatly influences performance. Traditional ETL models perform heavy transformations before loading data into a warehouse. Modern approaches often use ELT, where data is loaded first and transformed directly within the warehouse using powerful processing engines.

Cloud-native architectures introduce additional advantages, including auto-scaling and distributed computing. Serverless solutions allow pipelines to allocate resources dynamically based on workload demand, reducing costs during idle periods. Event-driven designs can further improve efficiency by triggering pipelines only when new data arrives rather than relying on fixed schedules.

The Importance of Automation and Monitoring

Optimization is an ongoing process, and visibility is essential for continuous improvement. Monitoring metrics such as execution time, resource consumption, throughput, and error rates helps engineers understand how pipelines perform under different conditions.
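As a minimal sketch of collecting such metrics, the context manager below records duration, row count, throughput, and outcome for one pipeline stage. The name `track_stage` and the list used as a metrics sink are assumptions for the example; a real setup would forward these records to whatever monitoring system is in place.

```python
import time
from contextlib import contextmanager

@contextmanager
def track_stage(name, sink):
    """Record duration, row count, throughput, and outcome for one stage."""
    record = {"stage": name, "rows": 0, "status": "ok"}
    start = time.perf_counter()
    try:
        yield record  # the stage sets record["rows"] as it works
    except Exception:
        record["status"] = "failed"
        raise
    finally:
        seconds = time.perf_counter() - start
        record["seconds"] = seconds
        record["rows_per_second"] = record["rows"] / seconds if seconds > 0 else 0.0
        sink.append(record)

if __name__ == "__main__":
    collected = []
    with track_stage("extract", collected) as stage:
        stage["rows"] = len(list(range(1000)))  # stand-in for real work
    print(collected[0]["stage"], collected[0]["status"])
```

Failures are recorded and then re-raised, so the metrics capture the error without hiding it from the orchestrator.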

Automated alerting systems can notify teams when performance drops or failures occur. Historical performance data also provides insights that help refine scheduling and resource allocation. Some advanced platforms even use machine learning to predict bottlenecks and automatically adjust configurations.

Data Quality and Governance Considerations

Optimization is not only about speed; maintaining high data quality is equally important. Poor data quality leads to repeated processing, manual fixes, and inconsistent analytics results. Adding validation checks early in the pipeline helps prevent downstream issues.
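An early validation step could be sketched like this. The rules (a required `id` and a non-negative numeric `amount`) and the function names are assumptions chosen for illustration; the point is that bad records are separated, with reasons, before any expensive transformation runs.

```python
def validate_record(record):
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    if record.get("id") is None:
        problems.append("missing id")
    amount = record.get("amount")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        problems.append("amount is not numeric")
    elif amount < 0:
        problems.append("amount is negative")
    return problems

def split_valid(records):
    """Separate clean records from rejected ones, keeping the reasons."""
    valid, rejected = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            rejected.append((record, problems))
        else:
            valid.append(record)
    return valid, rejected

if __name__ == "__main__":
    good, bad = split_valid([{"id": 1, "amount": 5.0}, {"amount": 3}])
    print(len(good), len(bad))
```

Keeping the rejected records together with their reasons, rather than silently dropping them, makes it easier to trace quality problems back to the source.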

Metadata management also supports optimization by documenting data sources, schemas, and transformation logic. Well-documented pipelines are easier to maintain and refine because engineers can quickly identify outdated processes or inefficient workflows.

Tools and Technologies That Support Optimization

Many modern data engineering tools are built with optimization features such as distributed processing, workflow orchestration, and advanced query optimization. Data warehouses provide powerful engines capable of handling large transformations, while orchestration platforms manage scheduling and task dependencies.

Selecting the right tools depends on factors like data volume, latency requirements, and existing infrastructure. Regardless of technology, the key principles remain the same: eliminate unnecessary work, leverage scalable systems, and continuously monitor performance.

Emerging Trends in ETL Optimization

As data ecosystems evolve, new trends continue to reshape ETL practices. Real-time processing is becoming more common, enabling organizations to analyze events as they occur rather than waiting for batch jobs. Artificial intelligence is also playing a growing role by enabling automated performance tuning and predictive resource management.

Another trend is the adoption of data mesh architectures, where data ownership is distributed across teams while maintaining standardized pipelines. This approach encourages modular design and reduces bottlenecks caused by centralized systems.

Conclusion

Optimizing ETL processes is essential for modern data engineering. As organizations handle larger datasets, efficient pipelines are critical for delivering timely insights while keeping infrastructure costs under control. By refining extraction, transformation, and loading stages, teams can significantly improve scalability and reliability.

Successful optimization combines technical best practices with thoughtful architecture. Techniques such as incremental loading, streamlined transformations, bulk operations, and cloud-native designs help create faster and more resilient pipelines. With continuous monitoring and automation, organizations can ensure their data workflows remain efficient and adaptable in a rapidly evolving technological landscape.